As technology continues to evolve, artificial intelligence (AI) is becoming increasingly prevalent in our everyday lives. From voice assistants to self-driving cars, AI has the potential to revolutionize the way we live and work. However, with this increased reliance on AI comes an increased risk that its safety layer will be breached. In this article, we will explore how serious a breach of AI’s safety layer can be, its potential consequences, and the measures that can be taken to prevent it.

What is AI’s safety layer?

AI’s safety layer is the set of protocols and safeguards designed to ensure that AI systems operate safely and predictably. Its purpose is to prevent unintended behavior, such as an AI system malfunctioning or misbehaving in ways that could cause harm to humans or the environment.

The safety layer of AI consists of several components, including the ability to detect and respond to unexpected situations, error correction mechanisms, and the ability to halt operation in cases of emergency.
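The three components above can be illustrated with a minimal Python sketch. It is not taken from any particular AI system: the `SafetyLayer` class, its parameters, and the simple range check used to flag unexpected output are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable


class EmergencyStop(Exception):
    """Raised when the wrapper has halted and refuses further requests."""


@dataclass
class SafetyLayer:
    """Hypothetical wrapper around an AI component (illustrative only)."""
    model: Callable[[float], float]  # the underlying AI component
    low: float                       # smallest output considered safe
    high: float                      # largest output considered safe
    fallback: float                  # conservative value used for error correction
    max_faults: int = 3              # consecutive faults tolerated before halting
    faults: int = 0
    halted: bool = False

    def act(self, observation: float) -> float:
        # Halt: once stopped, the system stays stopped until a human resets it.
        if self.halted:
            raise EmergencyStop("system halted; manual reset required")

        # Detection: a crash or an out-of-range output counts as unexpected.
        try:
            output = self.model(observation)
            ok = self.low <= output <= self.high
        except Exception:
            ok = False

        if ok:
            self.faults = 0
            return output

        # Error correction: substitute the fallback and count the fault.
        self.faults += 1
        if self.faults >= self.max_faults:
            self.halted = True
        return self.fallback


layer = SafetyLayer(model=lambda x: x * 2, low=0.0, high=10.0, fallback=0.0)
print(layer.act(3.0))   # 6.0, within range, so passed through
print(layer.act(50.0))  # 0.0, out of range, so replaced by the fallback
```

In a real system the range check would likely be replaced by domain-specific validation, and the halt would hand control to a human operator or a simpler backup system.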

How crucial is AI’s safety layer?

The importance of AI’s safety layer cannot be overstated. AI systems have the potential to affect every aspect of our lives, from transportation to healthcare to finance. If an AI system malfunctions or behaves in a way that is unexpected, the consequences can be catastrophic.

For example, imagine a self-driving car that is not properly programmed to detect pedestrians. If the car failed to recognize a pedestrian and hit them, it could result in injury or even death. Similarly, a malfunctioning AI system in a hospital could lead to incorrect diagnoses or treatment, potentially putting patients’ lives at risk.

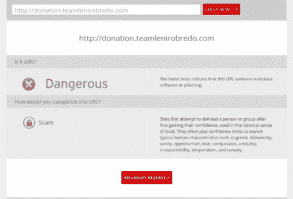

The potential consequences of a breach of AI’s safety layer are not limited to physical harm. There is also the risk of financial and reputational damage. For instance, if a compromised safety layer leads to a data breach, the consequences can be very serious: the AI itself may willingly leak sensitive data to hackers.

Preventing a breach of AI’s safety layer

Preventing a breach of AI’s safety layer is essential to ensuring the safe and responsible use of AI technology. There are several measures that can be taken to prevent breaches, including:

- Designing AI systems with safety in mind – AI systems should be designed with safety as a top priority. This means that the safety layer should be incorporated from the beginning of the design process.

- Regular testing and maintenance – Regular testing and maintenance can help to identify and prevent potential safety issues before they become problems.

- Monitoring and response – AI systems should be constantly monitored for unexpected behavior, and there should be mechanisms in place to respond to unexpected situations.

- Transparency – AI systems should be transparent, with clear documentation and explanations of how they operate. This can help to build trust and ensure that the system is being used in a responsible and safe manner.

- Collaboration and regulation – Collaboration between different organizations and industries can help to ensure that AI systems are being developed and used in a safe and responsible way. Additionally, government regulation can provide a framework for the safe and responsible use of AI technology.
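As one illustration of the monitoring and response measure in the list above, the following Python sketch tracks how often recent behavior was flagged as unexpected and signals when that rate gets too high. The `BehaviorMonitor` class, its thresholds, and the idea of a fixed-size rolling window are assumptions made for this example, not an established standard.

```python
from collections import deque


class BehaviorMonitor:
    """Hypothetical rolling-window monitor (illustrative only)."""

    def __init__(self, window: int = 100, max_anomaly_rate: float = 0.05,
                 min_samples: int = 20) -> None:
        self.recent = deque(maxlen=window)   # most recent anomaly flags
        self.max_anomaly_rate = max_anomaly_rate
        self.min_samples = min_samples       # avoid alerting on too little data

    def record(self, anomalous: bool) -> bool:
        """Store one observation; return True if a response should be triggered."""
        self.recent.append(anomalous)
        if len(self.recent) < self.min_samples:
            return False
        rate = sum(self.recent) / len(self.recent)
        return rate > self.max_anomaly_rate


monitor = BehaviorMonitor(window=10, max_anomaly_rate=0.2, min_samples=5)
for flag in [False] * 8 + [True] * 3:
    if monitor.record(flag):
        print("anomaly rate too high: escalate to a human operator")
```

How a decision gets flagged as anomalous, and what the response should be, depends entirely on the system being monitored; the sketch only shows the bookkeeping.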

Conclusion

AI technology has the potential to revolutionize the way we live and work, but it is important to remember that it is not without risks. The safety layer of AI is crucial to ensuring that AI systems operate safely